AMDGPU-PRO OpenCL on Fedora and Debian

Since the open source AMD GPU Linux drivers are now quite good I swapped my GTX 970 from my old machine for a Vega 56 in the new Threadripper build. Unfortunately the kernel and Mesa drivers do not support OpenCL. I tried ROCm for a while but only one build of version 1.2 would work with Fedora 30 and it would sometimes cause a kernel panic when in use with Darktable. Not to mention some strange image artifacts when using certain modules.

AMD does have a proprietary closed source driver for Linux but it only supports a very small set of distributions and the checks in the installer are very strict. So you can't just run the installer script or install the RPMs or DEBs. Plus there's the fact that I just need the OpenCL portions of the driver and not the display portions. You can continue to use the open source kernel drivers for display, OpenGL and Vulkan, which is preferable as they outperform the proprietary AMD drivers. Just to reiterate: this is not necessary for 3D acceleration and gaming. The AMDGPU-PRO proprietary drivers are needed for OpenCL and compute only. If you're just looking to play games or run Steam the open source Mesa implementation shipping in up-to-date distributions these days is more than good enough.

Fortunately there are just a few packages needed from the proprietary AMD driver for OpenCL to work and it is completely decoupled from the display driver. Start off by downloading the amdgpu-pro package for the latest version of Ubuntu. This will work for Debian or Fedora as you're just going to be extracting the .deb packages anyway. You can do the same thing with RPMs and rpm2cpio but it's just a bit more troublesome. dpkg is available on Fedora anyway so it's no big deal.

At any rate you'll need the following packages:

libdrm-amdgpu-amdgpu1

libdrm-amdgpu-common

opencl-amdgpu-pro-icd

opencl-orca-amdgpu-pro-icd

libopencl1-amdgpu-pro

The version numbers will vary depending on when you read this and download your package. First extract the tarball:

tar vxf amdgpu-pro-19.20-812932-ubuntu-18.04.tar.xz

Move the DEB packages that you need out to a separate directory and extract them:

dpkg-deb -x libdrm-amdgpu-amdgpu1_2.4.97-812932_amd64.deb opencl_root

dpkg-deb -x libdrm-amdgpu-common_1.0.0-812932_all.deb opencl_root

dpkg-deb -x opencl-amdgpu-pro-icd_19.20-812932_amd64.deb opencl_root

dpkg-deb -x opencl-orca-amdgpu-pro-icd_19.20-812932_amd64.deb opencl_root

dpkg-deb -x libopencl1-amdgpu-pro_19.20-812932_amd64.deb opencl_root
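Since the version numbers change with every driver release, the five extractions above can be wrapped in a small loop that matches on the package name only. This is just a sketch: the function names are my own, and it assumes the .deb files sit directly in the directory you pass in.

```shell
#!/bin/sh
# Package names from the list above; the version part is matched with a glob.
OPENCL_PKGS="libdrm-amdgpu-amdgpu1 libdrm-amdgpu-common opencl-amdgpu-pro-icd opencl-orca-amdgpu-pro-icd libopencl1-amdgpu-pro"

# Print the .deb files in directory $1 that belong to the packages above.
find_opencl_debs() {
    src_dir=$1
    for pkg in $OPENCL_PKGS; do
        for deb in "$src_dir"/"$pkg"_*.deb; do
            # An unmatched glob stays literal, so skip anything that does not exist.
            [ -e "$deb" ] && printf '%s\n' "$deb"
        done
    done
    return 0
}

# Extract every matching package into directory $2 (needs dpkg-deb).
extract_opencl_debs() {
    src_dir=$1
    dest_dir=$2
    mkdir -p "$dest_dir" || return 1
    find_opencl_debs "$src_dir" | while IFS= read -r deb; do
        dpkg-deb -x "$deb" "$dest_dir" || exit 1
    done
}

# Example: extract_opencl_debs amdgpu-pro-19.20-812932-ubuntu-18.04 opencl_root
```

Running find_opencl_debs on its own first is a cheap way to see which files would be picked up before anything gets extracted.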

Change directory to where you extracted those files:

cd opencl_root

Copy the necessary files into place:

sudo cp etc/OpenCL/vendors/* /etc/OpenCL/vendors/

sudo cp -R opt/amdgpu* /opt/.

Now the dynamic linker needs to be updated so it knows where the libraries are located. In /etc/ld.so.conf.d/ create two files and put the following lines in them:

/etc/ld.so.conf.d/amdgpu-pro.conf:

/opt/amdgpu-pro/lib/x86_64-linux-gnu/

/etc/ld.so.conf.d/amdgpu.conf:

/opt/amdgpu/lib/x86_64-linux-gnu/

Then run ldconfig:

sudo ldconfig
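If you'd rather not create the two files by hand, something like the following would write them. Treat it as a sketch: the function name is made up, and the optional directory argument only exists so you can try it against a scratch directory before touching /etc.

```shell
#!/bin/sh
# Write the two linker config files described above into $1
# (defaults to /etc/ld.so.conf.d, which needs root).
write_amdgpu_ld_conf() {
    conf_dir=${1:-/etc/ld.so.conf.d}
    printf '%s\n' /opt/amdgpu-pro/lib/x86_64-linux-gnu/ > "$conf_dir/amdgpu-pro.conf" || return 1
    printf '%s\n' /opt/amdgpu/lib/x86_64-linux-gnu/ > "$conf_dir/amdgpu.conf" || return 1
}

# Example (as root): write_amdgpu_ld_conf && ldconfig
```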

Test whether it works with clinfo -l. If it's working you'll see something like this:

clinfo -l

...

Platform #0: AMD Accelerated Parallel Processing

`-- Device #0: gfx900

Platform #1: Clover

`-- Device #0: Radeon RX Vega (VEGA10, DRM 3.30.0, 5.1.20-300.fc30.x86_64, LLVM 8.0.0)

...
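If you want to check for the AMD platform from a script rather than by eye, a grep on the platform name from the output above is enough. The function name here is my own and the check is only as good as that string staying the same between driver releases.

```shell
#!/bin/sh
# Read `clinfo -l` output on stdin and succeed if the AMD platform is listed.
has_amd_opencl() {
    grep -q 'AMD Accelerated Parallel Processing'
}

# Example: clinfo -l | has_amd_opencl && echo "AMD OpenCL platform found"
```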

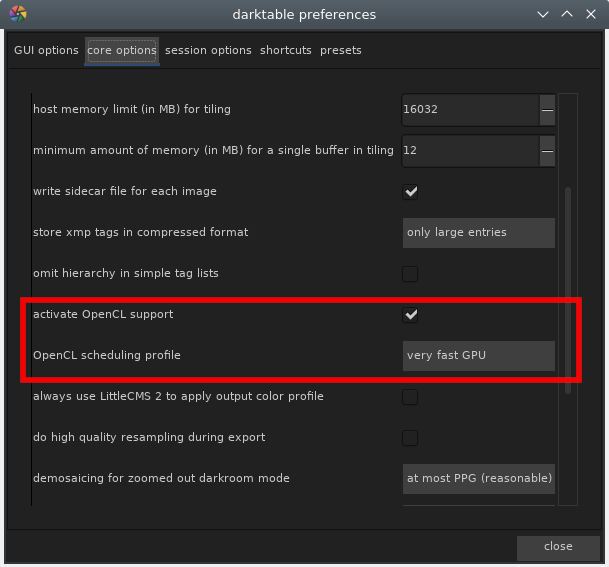

Darktable should also allow OpenCL now. You may need to delete the pre-compiled OpenCL kernels in ~/.cache/darktable if you were using ROCm before.

While this isn't the perfect option in terms of free software it's still preferable to nVidia's driver in my opinion. Hopefully ROCm will become more stable and will be packaged by Debian and Fedora in the near future, which seems likely given AMD has blessed ROCm as their future compute solution.

Linux Desktop Update

It's been a few years since I became a full-time Linux user for my photo and media workflow. As we're now in the sixth month of 2019 I thought it'd be a good time to do a quick update and report on how things are going. When I decided to move off OS X for my photography and video work in 2015 the landscape was quite different. I was a seasoned Linux user and admin at the time but had kept Macs around for access to Adobe and Apple programs. But things change, the libre software options were getting better and I was mostly tired of giving Adobe money and to a lesser extent Apple. Keep in mind this was before Apple released the unreliable, throttling, terrible-keyboard-having and consumable current generation MacBook Pro. If I wasn't set on switching before then those things alone would have sealed the deal. Before then I was less hostile toward Apple's product line.

I still have a 2015 MacBook Pro in the house but I mostly use it for two things: my Canon Pro 100 printer and Epson V600 scanner. I have what are now ancient copies of Photoshop CS6 and Lightroom 5.7 on it that haven't seen any use in a very long time either. My primary machines these days are an AMD Threadripper desktop and a Dell Precision laptop, both of which have only Fedora Linux installed on them. No dual booting, no Windows 10 virtual machines, no “cheating” if you could call it that. Honestly don’t think I could be all that useful in a Windows environment these days.

Just laying my cards here on the table from the beginning so no one sees a Mac in my presence and thinks I’m making this all up.

Now then, in the intervening years the Linux desktop has improved dramatically. KDE Plasma has become my go-to desktop environment for two main reasons. First of all when I picked up the Precision I needed something that did HiDPI scaling reasonably well. This forced me off XFCE and got me comparing the GNOME3 and KDE Plasma 5 desktop environments. I know a lot of people like i3 Gaps or MATE or whatever but IMO there are a lot of good reasons to stick with the major players if you're looking to just get things done. Plus if I was going to show normal people how "full featured" the Linux desktop had become some sort of super tweaky desktop made for posting screenshots to /g/ or Reddit was out of the question. My wife should be able to pick up my machine and use it without much fuss. Plasma and GNOME look and act like modern desktops so that's the route I went. I ultimately decided on KDE Plasma 5 since it supported fractional scaling and my Precision looks best at 1.5-1.6x. GNOME3 on the other hand has a stinky foot for a mascot and could only do full integer scaling at the time. I think they've added fractional scaling as a test feature in one of the latest releases however. Not to mention the difference in resource usage between the two. I really wanted to like GNOME3 since they did something actually brave and different with the desktop interface but it was lacking too many features, broke a lot and ate CPU and RAM like crazy. Plasma 5 on the other hand runs on everything from my super old Latitude E4200 with 3GB of RAM to my Threadripper with 64GB.

The KDE folks have really been hitting it out of the park the last few years with their releases of the Plasma desktop, as long as you don’t need any accessibility features. GNOME3 is still the only desktop doing serious work on that front. The integrated applications for KDE are a different story too and tend to vary in quality. Gwenview has been great and Okular is the best PDF application out there today IMO. Konsole has become my favorite terminal emulator as well. However KMail is an unusable train wreck. I imagine the resources put into it aren't the greatest as most Linux users are probably going to use Thunderbird, mutt, alpine or a webmail interface.

On the distribution side of things I've mostly stuck to Fedora and Debian. I've been a long time Debian user as it covers a lot of use cases very well, is extremely stable and I'm rather fond of their governance structure. Plus it's dead simple to move from one release to the next. Fedora has made some significant strides in this direction lately and I like their "cutting edge adjacent" strategy in terms of software versions. The last time I used Fedora for anything was the Fedora Core 4 through about the Fedora 8 days. After then it went through some shaky periods in terms of stability and usability but nowadays I'd say it's easier to get up and going with than Ubuntu. Fedora's KDE spin is quite nice as well, despite Fedora being known as "the GNOME distro." My go-to machines for photo and video work are all running Fedora right now and I have a couple of desktops and laptops on Debian. All of my infrastructure stuff runs Debian too. IMO you can't really go wrong with either and if you want to get started with Linux I'd try Fedora, especially if you're a first time Linux user. I'm not a fan of derivative distributions and I've always found some of Ubuntu's choices to be strange, so just skip those and go straight for Debian Stable IMO.

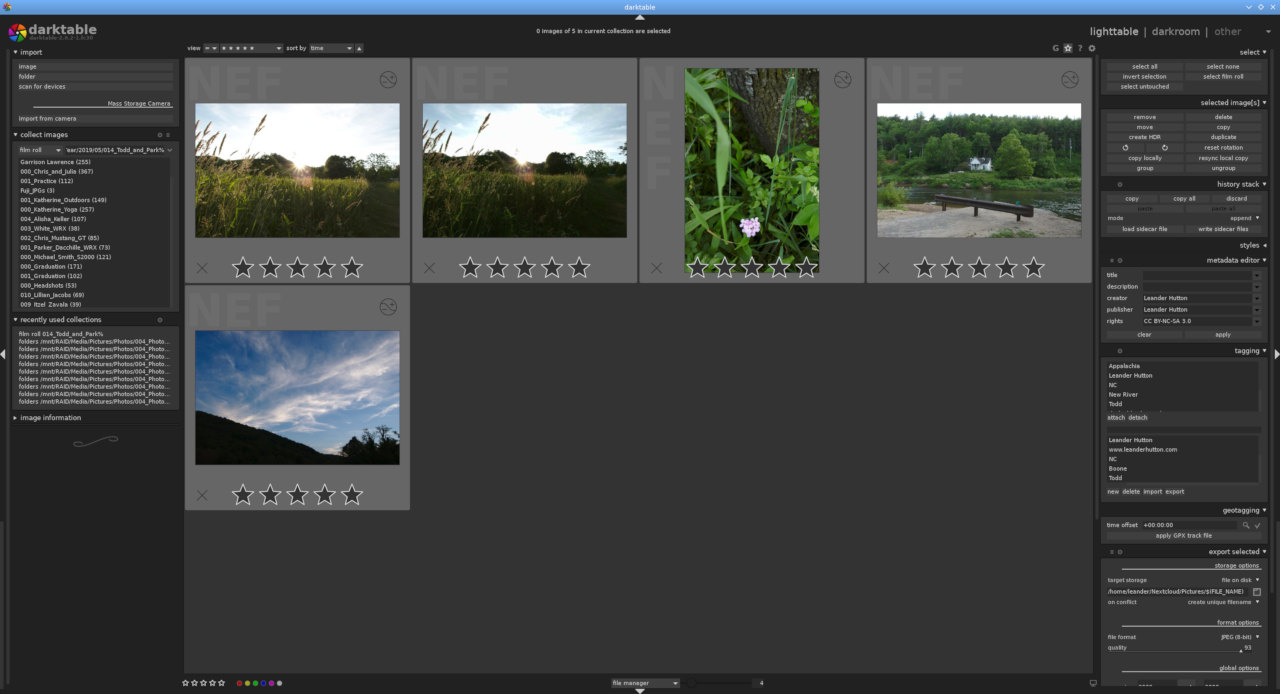

On the less day-to-day bread and butter desktop stuff and more image workflow side I'm continually impressed with Darktable. I don't miss Lightroom in the slightest. I started moving over to Fuji about the same time as I moved to Darktable and it has handled the RAW files nicely. From what I understand until recently Lightroom struggled with the RAF format and making effective use of X-Trans. Iridient Developer seems to be more popular with Fuji users on Mac and Windows but I find Darktable’s RAF conversion to be quite fantastic. Indeed others seem to agree with my sentiment. Even if you’re stuck using a Mac or Windows machine I’d suggest trying out Darktable for Fuji RAW conversion. For the rest of the digital asset management workflow Darktable has been more than adequate. It does have more of a learning curve than Lightroom but it also allows for more under-the-hood exploration with modules like equalizer. I tend to be pretty self reliant on the organizing files front on my disks though and have heard others coming from things like Aperture and iPhoto saying Darktable doesn’t do enough on that front. The developers have stated time and again they aren’t writing a file manager and there are other better solutions out there for it. Personally I think it’s fine, but if you’re used to just dumping your files at a library management program and letting it handle the files on disk it will be an adjustment. Honestly I don’t like that approach as it locks your organizational structure into that piece of software and I’d rather just have the files available to me in a directory structure to move about as I see fit.

The GIMP has changed some since I first moved over, but in general it’s slower moving than most software package development cycles these days. There seem to be two camps of GIMP users: it’s adequate or it’s a piece of junk. Where you land largely seems to be dependent on what you’re trying to do and in my opinion photographers are better served by the GIMP than designers. Most people I see complaining about it lacking features are designers and print production types. Which is fair, GIMP does lack a CMYK mode among other things and there is an adjustment to be made coming from Adobe land. For my needs it still gets the job done, especially now that 16-bit and 32-bit images are supported in 2.10. Before then I was running the unstable testing branch as 2.8 only supported 8-bit images. If you're only working with JPEGs this isn't a big deal. It only matters when you're working with TIFFs and RAW file derivatives. If all you need is minor retouching there's no reason to use Photoshop, unless you need a specific feature or work with others in an Adobe-centric environment. But for most of us just working by ourselves out here GIMP seems to get the job done.

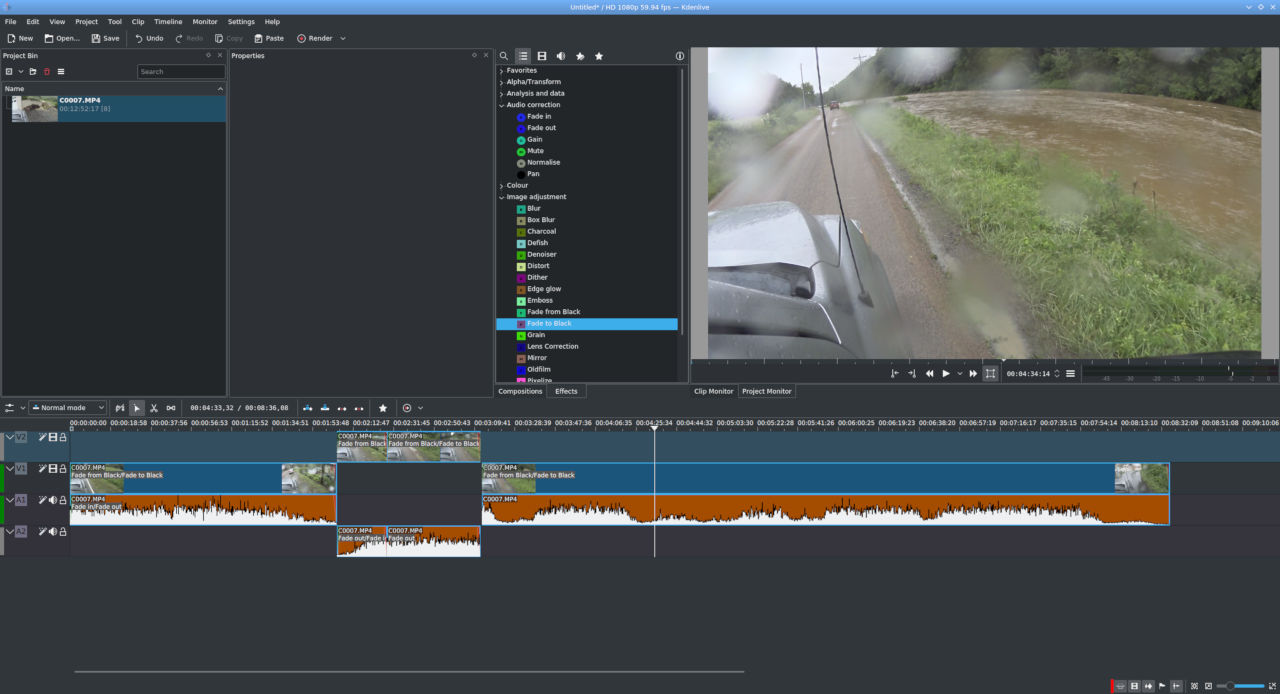

Video editing is something that has changed massively since 2015. When I first moved my media production efforts to Linux there wasn’t a good option for a non-linear editor so I dual booted and used Sony Vegas or just got out the MacBook Pro for iMovie, Final Cut or QuickTime. That is no longer the case. Kdenlive has made massive strides and recently did a huge refactoring release that squished a lot of long standing odd behaviors, but I’ve been using it since 2017 without many complaints. If you’re used to old-school iMovie and Final Cut then Kdenlive is pretty easy to move around in. I’ve heard some folks say it’s similar to Adobe Premiere as well.

I am still glad I made the complete switchover, even before this I was fine with Linux as a desktop operating system. However now I have moved my comfort zone with it and I’m no longer at the whims of a couple of rather controlling companies for the creative part of my life. I really don’t like feeding anti-competitive, monopolistic, end-user hostile companies trying to squeeze blood from a stone with monthly subscriptions or engineered to fail overly delicate status symbols.

I’ve also come to despise the term “industry standard” as it seems to just be a good excuse to not try to move outside the box you were taught to stay in. “You get what you pay for” is another terrible motto and seems to be used by these corporate types to put down libre software alternatives on a regular basis. I’m off on a tangent at this point, but I’d suggest trying some of these tools. There are even more applications I have not covered here like Krita and RawTherapee, both of which I've been using lately as well. It really is about the best time it’s ever been to jettison the likes of Adobe, Apple, Google or Microsoft, depending on what exactly your need is and what products you wish to avoid. In the case of most of us just working in creative photo and video fields by ourselves or for ourselves it’s really just about overcoming the inertia that Adobe has in this space.

Even professionally it's more than possible, but like switching camera systems there is an initial time cost and you have to decide if the benefits are worth it. In my opinion the freedom is worth the trouble and my work hasn't suffered for it. Just don't let others saying "oh, that's not for anyone doing serious work" discourage you. I just don't think that being on a corporate leash is the only way to get things done.

IBM Model F AT

Probably could use a little cleaning but this is the ultimate buckling spring board in my opinion and is finally in my possession. It uses a capacitive PCB for the sensing assembly instead of a membrane like the Model M's buckling spring mechanism. Not that the Model M is bad, this is just a lot smoother, more tactile and louder. The only mild irritation is the placement of ESC since I'm a vi user.

My apologies for the condition of the desktop itself. It's a fifteen year old big box store particle board desk and the veneer is really letting go. A future project will likely be building a new top for it or outright building a whole new desk.

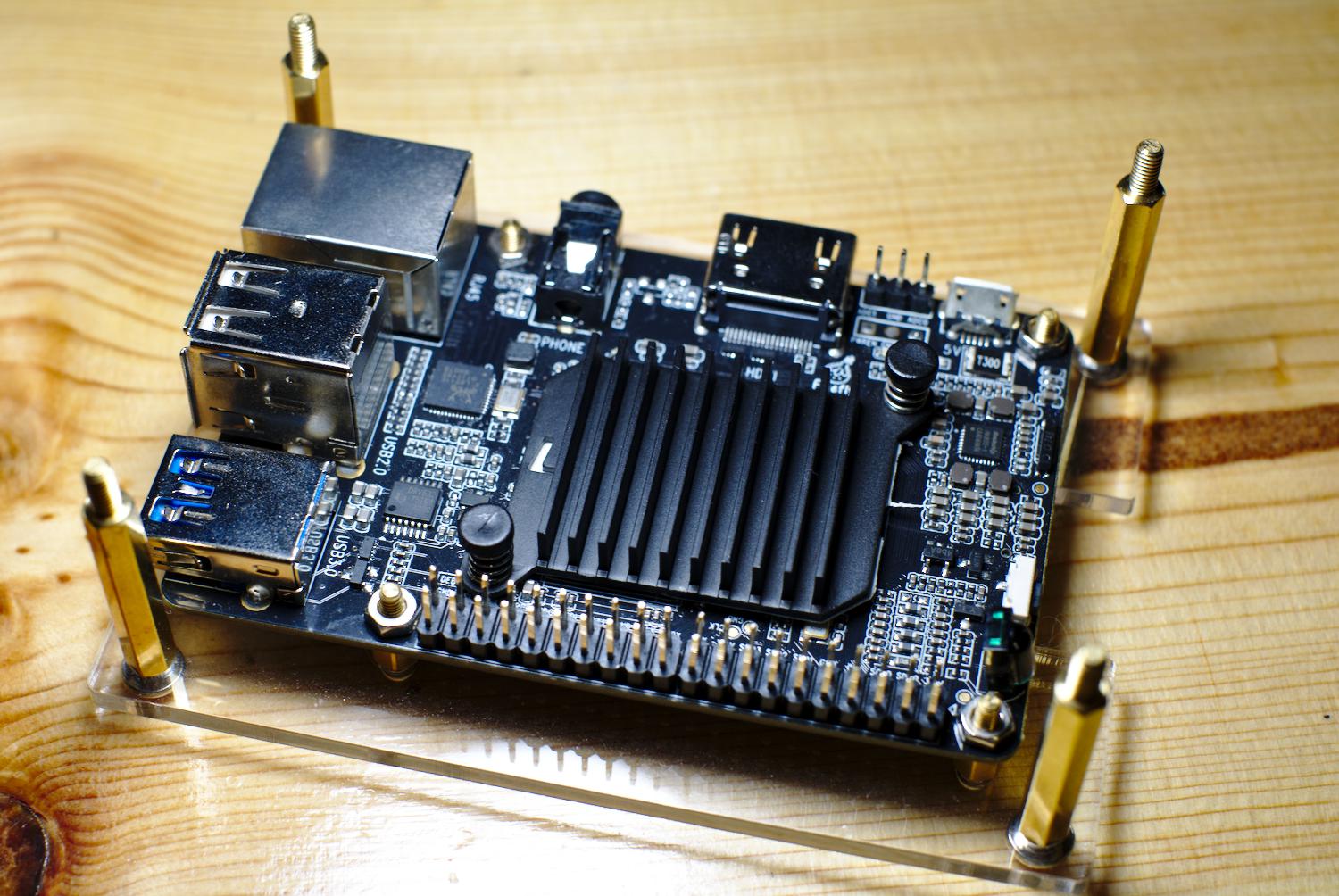

Debian Mirror on Libre Computer ROC-RK3328-CC

In the age of 100Mbps+ fiber internet connections it's hard to imagine why you'd need to mirror your OS packages locally. There are a couple of reasons. Most of them come down to being a good neighbor to the larger package mirrors, and in my opinion it never hurts to have a locally accessible backup of the entire package tree in case things get weird and you lose internet connectivity for a while. Mostly I do it because between physical hosts and virtual machines I have quite a few Debian boxes floating around on different releases. Pointing them all at a local machine and just having the mirror grab the files saves the host on the other end a lot of bandwidth.

Small single board computers are great for this in theory. They take up little space and power but until recently that has come at the cost of performance and storage. I've never been terribly impressed with the Raspberry Pi and its limited connectivity has made it less than ideal for this sort of task. Enter the Libre Computer ROC-RK3328-CC board. It shares the same form factor as the RPi B type boards (so it works with those cases) but has more RAM, a faster CPU, actual gigabit Ethernet, an eMMC slot and a USB 3.0 port. It also uses less power than the RPi3B+. I went with the 4GB edition because why not but this could easily be done on the 2GB or 1GB version of the board if you want to save a little money. The killer features for this task are the gigabit Ethernet and the USB 3.0 port. You don't need 4GB of RAM to run a couple of cron scripts and nginx.

First you'll need an OS for your SBC. I'm currently running a self-made Armbian but Libre Computer has Debian-based images as well. My Renegade board runs off a 32GB eMMC but it can be a bit tricky to flash one of those. The MicroSD slot should provide good enough performance to run the OS if you want to go that route for simplicity's sake. For my mirror I'm grabbing Jessie (oldstable), Stretch (stable), Buster (testing) and Sid (unstable) in AMD64 and i386 flavors using debmirror. This takes up around 600GB as of November 2018 so a 1TB hard drive or SSD should do the job. Personally I just went with a dual bay 3.5" USB 3 enclosure and a couple of 2TB drives with BTRFS RAID1.
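Given how much space the mirror eats, it can be worth checking free space on the target before kicking off a sync. Here's a rough sketch using df; the function name and the 600GB figure in the example are just taken from my numbers above, so adjust for whatever releases and architectures you mirror.

```shell
#!/bin/sh
# Succeed if the filesystem holding path $1 has at least $2 GB available.
has_free_gb() {
    path=$1
    need_gb=$2
    # -P forces one line per filesystem, -k reports 1K blocks; column 4 is "Available".
    avail_kb=$(df -Pk "$path" | awk 'NR==2 { print $4 }')
    [ -n "$avail_kb" ] || return 2
    [ "$avail_kb" -ge $((need_gb * 1024 * 1024)) ]
}

# Example: has_free_gb /mnt/usb 600 || echo "not enough room for the mirror"
```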

The Debian distribution that is packaged for the ROC-RK3328-CC board has an empty fstab by default. After plugging in your USB drive, formatting it (if needed) and finding the UUID (ls -lah /dev/disk/by-uuid) add an entry like so:

UUID=your UUID here /mnt/usb btrfs nofail 0 2

The nofail option allows the board to continue booting if the drive is not present for some reason. Btrfs is optional, ext4 or xfs will work fine.
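To avoid typos when copying the UUID by hand, the fstab line can be generated instead. This is only a sketch: the function name is mine, the defaults mirror the entry above, and /dev/sda1 in the example is a stand-in for whatever your drive shows up as.

```shell
#!/bin/sh
# Print an fstab line for UUID $1, mount point $2 (default /mnt/usb)
# and filesystem type $3 (default btrfs), using the same options as above.
fstab_entry() {
    printf 'UUID=%s %s %s nofail 0 2\n' "$1" "${2:-/mnt/usb}" "${3:-btrfs}"
}

# Example (as root; /dev/sda1 is hypothetical):
#   fstab_entry "$(blkid -s UUID -o value /dev/sda1)" >> /etc/fstab
```

Printing the line first and appending it only once it looks right is the safer order of operations, since a bad fstab entry without nofail can hang the boot.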

Best practices dictate setting up a separate user for the mirror:

groupadd mirror

useradd -d /var/mirror -g mirror mirror

mkdir /mnt/usb/mirror

chown -R mirror:mirror /mnt/usb/mirror

ln -s /mnt/usb/mirror /var/mirror

Next add some packages:

apt-get install nginx ed screen xz-utils debmirror debian-keyring

Debmirror needs access to the GPG keys to verify the source of the packages, so you'll need to import them into the mirror account:

su - mirror

gpg --no-default-keyring --keyring trustedkeys.gpg --import /usr/share/keyrings/debian-archive-keyring.gpg

Every once in a while the keys will need updating (particularly when a new version of Debian is released), updating the keys is the same as the initial installation:

gpg --no-default-keyring --keyring trustedkeys.gpg --import /usr/share/keyrings/debian-archive-keyring.gpg

Next up we need to script out the mirror update process. After some tinkering and searching mine ended up looking like this:

#!/bin/sh

FTP=ftp.us.debian.org

DEST=/mnt/usb/mirror/debian

VERSIONS=jessie,stretch,testing,sid

ARCH=amd64,i386

debmirror ${DEST} --host=${FTP} --root=/debian --dist=${VERSIONS} --section=main,contrib,non-free,main/debian-installer --i18n --arch=${ARCH} --passive --cleanup $VERBOSE

and I saved it to /usr/local/bin/mirror.sh

It's now time to do the initial sync. This can take a while so run it in a screen session:

screen su mirror -c "/usr/local/bin/mirror.sh"

After that's finished (probably several hours later), we'll make the mirror accessible via nginx. In my case I had to uncomment the following line in /etc/nginx/nginx.conf:

server_names_hash_bucket_size 64;

as for some reason it was having a cow over the length of my hostname.

I created a vhost file at /etc/nginx/sites-available/000-yourhost.yourdomain.com:

server {

listen 80;

server_name wren.buttonhost.net www.wren.buttonhost.net;

access_log /var/log/nginx/wren.buttonhost.net-access.log;

error_log /var/log/nginx/wren.buttonhost.net-error.log;

location / {

root /var/mirror/;

autoindex on;

}

}

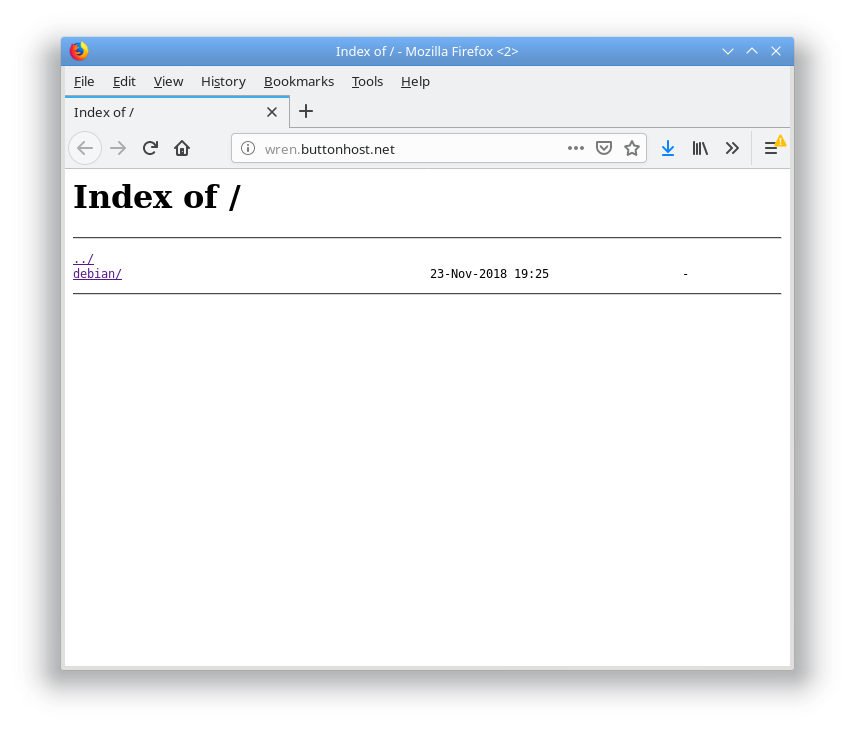

I have the DNS assigned on my DNS server/gateway but you'll need to figure out how to deal with that. Just make sure yourhost.yourdomain.com points at this machine's IP.

Then link this file in the /etc/nginx/sites-enabled directory:

cd /etc/nginx/sites-enabled

ln -s ../sites-available/000-yourhost.yourdomain.com 000-yourhost.yourdomain.com.cfg

My machine is called wren.buttonhost.net, please change the name in the configuration file to your own machine's vhost name!

and restart nginx:

systemctl restart nginx

Afterwards you should be able to navigate to that host in a web browser and see your mirror.
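Pointing a client at the mirror is then a matter of a sources.list line. The helper below is a sketch that prints one. It assumes the layout used here (nginx root at /var/mirror, archive under debian/) and the same sections the mirror script pulls. The function name is made up.

```shell
#!/bin/sh
# Print an APT sources.list line for mirror host $1 and release $2.
mirror_sources_line() {
    host=$1
    release=$2
    printf 'deb http://%s/debian %s main contrib non-free\n' "$host" "$release"
}

# Example: mirror_sources_line yourhost.yourdomain.com stretch
```

Only the main archive is mirrored in this setup, so security updates would still need to come from the usual upstream entry on each client.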

The last step is to automate the update process via cron, here in /etc/cron.d/debmirror:

# sync Debian mirrors three times a week

30 5 * * 1,3,5 mirror /usr/local/bin/mirror.sh
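A sync can run for hours, so it's conceivable that a cron run starts while an earlier one is still going. One way to guard against that, sketched here with a made-up helper and a hypothetical lock path, is a simple lock directory, since mkdir is atomic:

```shell
#!/bin/sh
# Run a command only if lock directory $1 can be created; skip otherwise.
run_locked() {
    lock_dir=$1
    shift
    if ! mkdir "$lock_dir" 2>/dev/null; then
        echo "another run holds $lock_dir, skipping" >&2
        return 75
    fi
    "$@"
    status=$?
    # Release the lock whether or not the command succeeded.
    rmdir "$lock_dir"
    return "$status"
}

# Example (lock path is hypothetical):
#   run_locked /var/mirror/.sync.lock /usr/local/bin/mirror.sh
```

The obvious weakness is that a crash or power loss leaves the lock directory behind, in which case it has to be removed by hand before the next sync will run.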

You now have a Debian mirror that you can fit in your pocket and run off a lithium battery pack if needed. Why would you need that? I don't know, why wouldn't you? Outside of the parts about the fstab, what packages to install and symlinking the /var/mirror directory to the USB drive the rest of this should work on any Debian machine regardless of architecture.

The 2019 Trackball Revival

In an attempt to head off some elbow tendon pain I started looking into a different mouse for the office. The suspect mouse is the Dell laser model that came with my work machine a few years ago. At home I use a much more ergonomic Logitech G400 that doesn't seem to give me issues. The Dell has a very extreme angle on the front so it's a very unnatural fit for my hand. Maybe it works for people with smaller hands? It doesn't help that the DPI settings seem to be messed up and it will only swap between turtle in molasses and rabbit on meth. It's pretty hard to use in either of those modes. While I'm guilty of keyboard snobbery I'm generally OK with whatever mouse I can find that is comfortable. The Microsoft D66 and Logitech MX518/G400 are usually my go-to as they fit my hand well, are relatively inexpensive, don't require any third party software and are basically everywhere. I guess it's time that I started getting into other relatively obscure input devices.

I haven't used a trackball since the late 90s. Back then I didn't care for them as scroll wheels were becoming big and more and more pieces of software were utilizing them. At the time there really weren't any trackballs on the market with a scroll wheel, which was a real sore point in early FPS games. Optical mice were also starting to come on the scene and were popular among gaming enthusiasts. We had a Logitech Marble back then, which is still available new BTW. It's a fine trackball but lacking the scroll wheel was a letdown back then.

Nowadays scroll wheels are available on the few trackballs still on the market in the US (they're apparently still big in Japan and that's not a joke). There aren't many available as they've fallen out of favor with most people. Logitech makes a couple and there are some sellers online that sell Japanese import Elecoms. I went with a Logitech M570 as the MX Ergo was quite expensive. Plus I'm generally not a fan of non-user replaceable batteries and the rubberized coating the MX Ergo had. While I had used a Logitech Marble years ago I'd never used a thumb ball type trackball, period, so this was a first. So far I can say the M570 is a pleasure to use. It's only been a little over a week but I'm already comfortable with it. As far as gaming goes I've only managed to try a little Team Fortress 2 with it and I can see a trackball being a huge advantage in FPS games once you adjust. To be honest I'm not sure why these things aren't more popular than they are with gamers. The M570 is a tad small in the width department so my fingers tend to fall off the right hand side of the device but otherwise it fits my hand well. I really don't care for the wireless part, especially since it's not Bluetooth and requires a small USB receiver, but it at least runs on a single AA that's user replaceable. Wireless trackballs don't make a lot of sense if you ask me. They don't move and aren't something you're going to use across the room, but it's what the kids like so whatever.

I think the key to preventing RSI is to stop the repetitive part of it so I'm not ditching the mouse completely. Changing position and devices will help in that direction quite a bit. I may be on eBay looking at some older models of trackballs too as most have been relegated to the dust bin of history, although some models can be quite pricey as they have a bit of a cult following. Elecom gets high marks on the new market but they can be pricey as they have to be imported. Really, if you want to try one out I'd say give the M570 or a Trackman Marble a shake. You won't be out more than about $20-25 and if you like it there are other higher-end options out there. As an added bonus ne'er-do-wells won't really be able to mess with your machine and I've already baffled a few people when I had to work in an open office setting this week ...

Unexpected Snow Day

Today was one of those days where a dusting to 1" turned into a bit more snow for Boone. All of these photos were taken throughout the day with the Fuji x100s.

Ran into some folks out and about doing or wearing interesting things. This dog was pretty excited to get a move on.

Winter clothing can sometimes be interesting.

As far as I could tell this is like that thing where people stack stuff on their cat but with a person.

2018 Holiday Random Print Mail Bombardment

Like getting random stuff in the mail? This year I'm going to mail out some prints to people who want them. They'll be either 4" x 6" or 5" x 7" depending on the paper I have laying around at the time. The image will be mostly random, could be pertinent to how we know each other or just whatever the heck I feel like printing. All of them will be photos I have taken.

If you want to participate please send the address of your mail hole or receptacle to leander@one-button.org.

In person delivery is an option if you are within my laziness radius.

Japanese Input on Fedora KDE

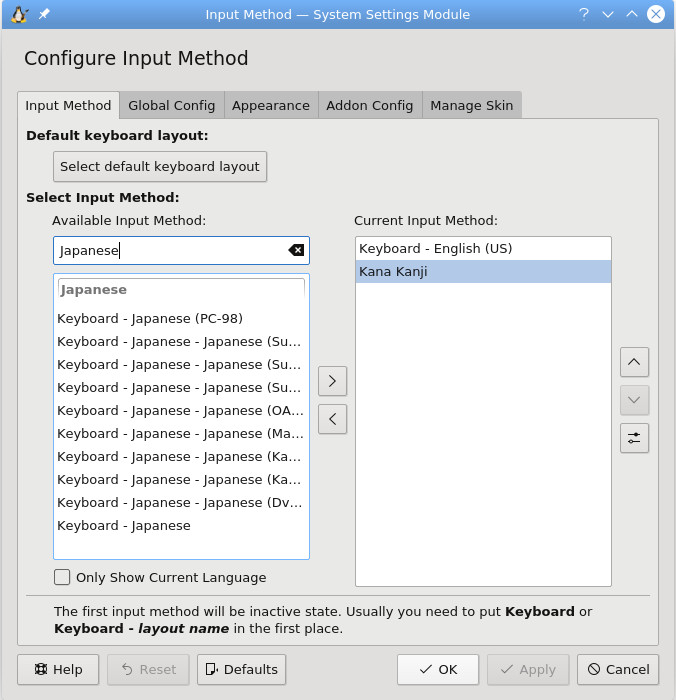

I've been taking a Japanese class over the last semester so I've had a need for Japanese input on laptops. I've been running Fedora KDE on my portable machines lately and I had no trouble finding documentation on changing up input methods on Fedora GNOME but there was little out there on the KDE spin. Some of the Kubuntu documentation got me in the right direction though.

The method I found for kana and kanji input uses fcitx, which has packages in the Fedora repos:

sudo dnf install fcitx-kkc kcm-fcitx

After those have installed add the Fcitx utility to the Autostart items in Plasma 5. Also, add the fcitx input modifiers in /etc/profile.d/fcitx.sh:

export XMODIFIERS="@im=fcitx"

export QT_IM_MODULE=fcitx

export GTK_IM_MODULE=fcitx
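If Japanese input doesn't come up after the restart, the first thing I'd check is whether those three variables actually made it into the session. A small sketch for that, with a function name of my own invention:

```shell
#!/bin/sh
# Report any fcitx input method variable that is not set as expected.
# Returns 0 when all three look right, 1 otherwise.
check_fcitx_env() {
    bad=0
    if [ "${XMODIFIERS:-}" != "@im=fcitx" ]; then
        echo "XMODIFIERS is '${XMODIFIERS:-}', expected '@im=fcitx'"
        bad=1
    fi
    if [ "${QT_IM_MODULE:-}" != "fcitx" ]; then
        echo "QT_IM_MODULE is '${QT_IM_MODULE:-}', expected 'fcitx'"
        bad=1
    fi
    if [ "${GTK_IM_MODULE:-}" != "fcitx" ]; then
        echo "GTK_IM_MODULE is '${GTK_IM_MODULE:-}', expected 'fcitx'"
        bad=1
    fi
    return "$bad"
}

# Example: run check_fcitx_env in Konsole after logging back in.
```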

Restart the machine and open up the Fcitx Input Method configuration, you'll want to add the Japanese Kana Kanji as a secondary input method. If you don't see it come up in a search uncheck the "Only Show Current Language" option.

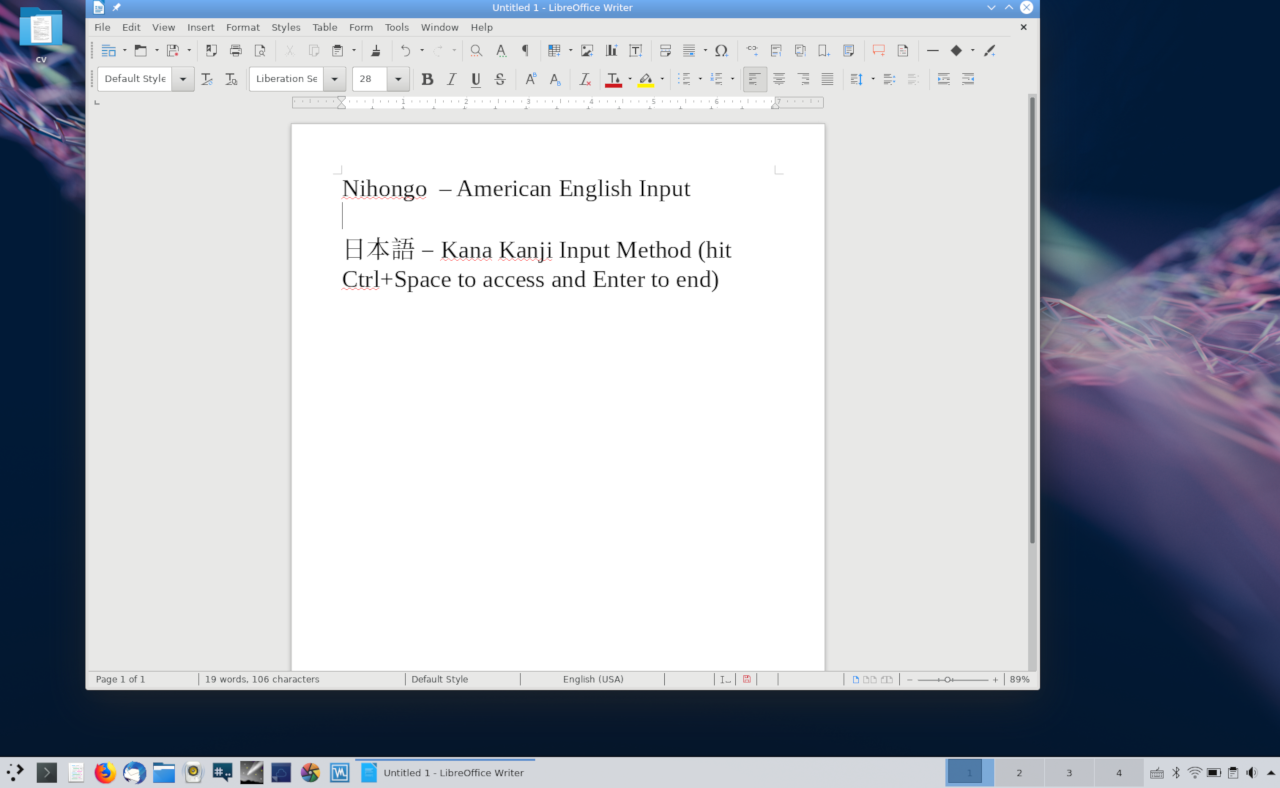

Now you should be able to hit Ctrl+Space in any text input field and replace Romaji with Kana or Kanji characters. Additional presses of the space bar will cycle through different characters for the same sound. So you can have にほんご or 日本語 for example. Pressing Ctrl+Space again exits the Kana Kanji input mode. Pressing return after typing a word in kana or kanji finishes the selection and allows you to move on to the next word. Very useful and no swapping keyboard layouts!

Autumn 2018

Just a few photos from the last couple of months around Boone, NC. All taken with either the x100s or X-T2.

IBM Model M 1393464 Restore

This was originally posted some time ago on Deskthority. Hosting it here for posterity's sake.

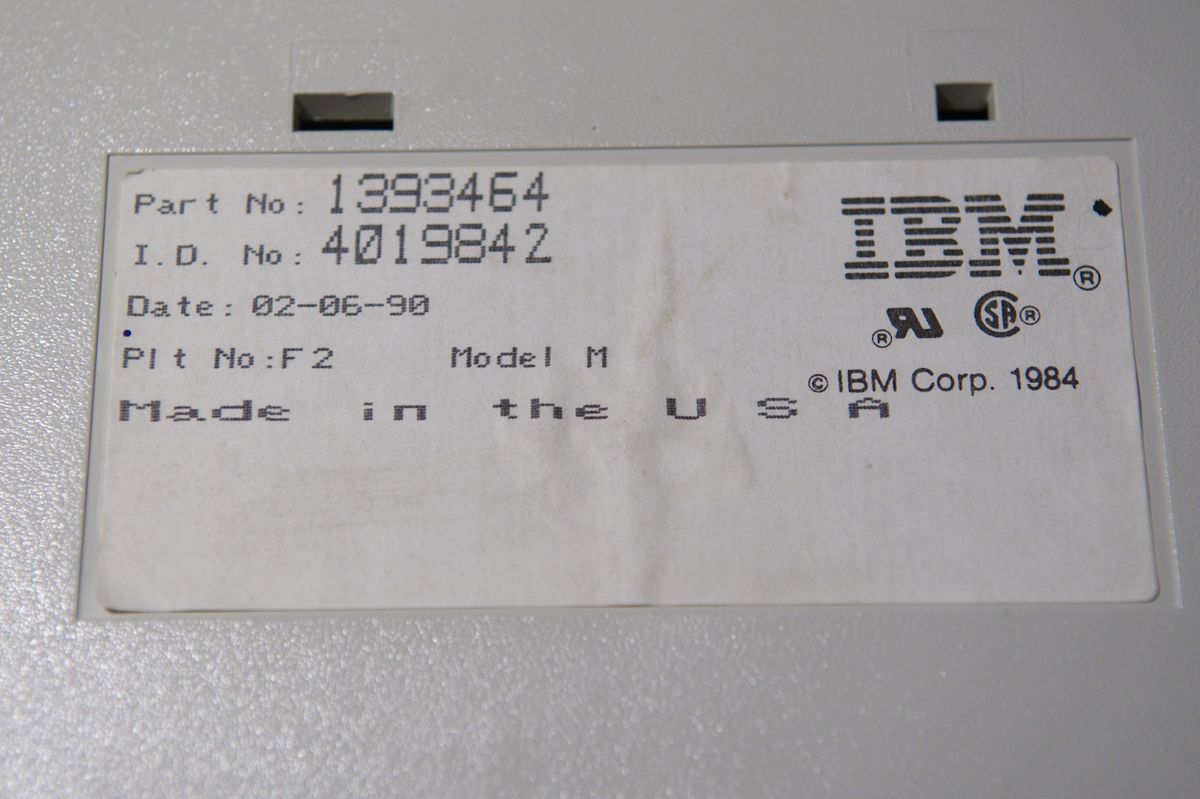

Recently I stumbled on a 1393464 Model M off eBay for a decent price. This was a model with custom keycaps made for the American Airlines reservation system. Otherwise it's a normal 2nd generation Model M from 1990. I just think the differently printed keycaps look neat.

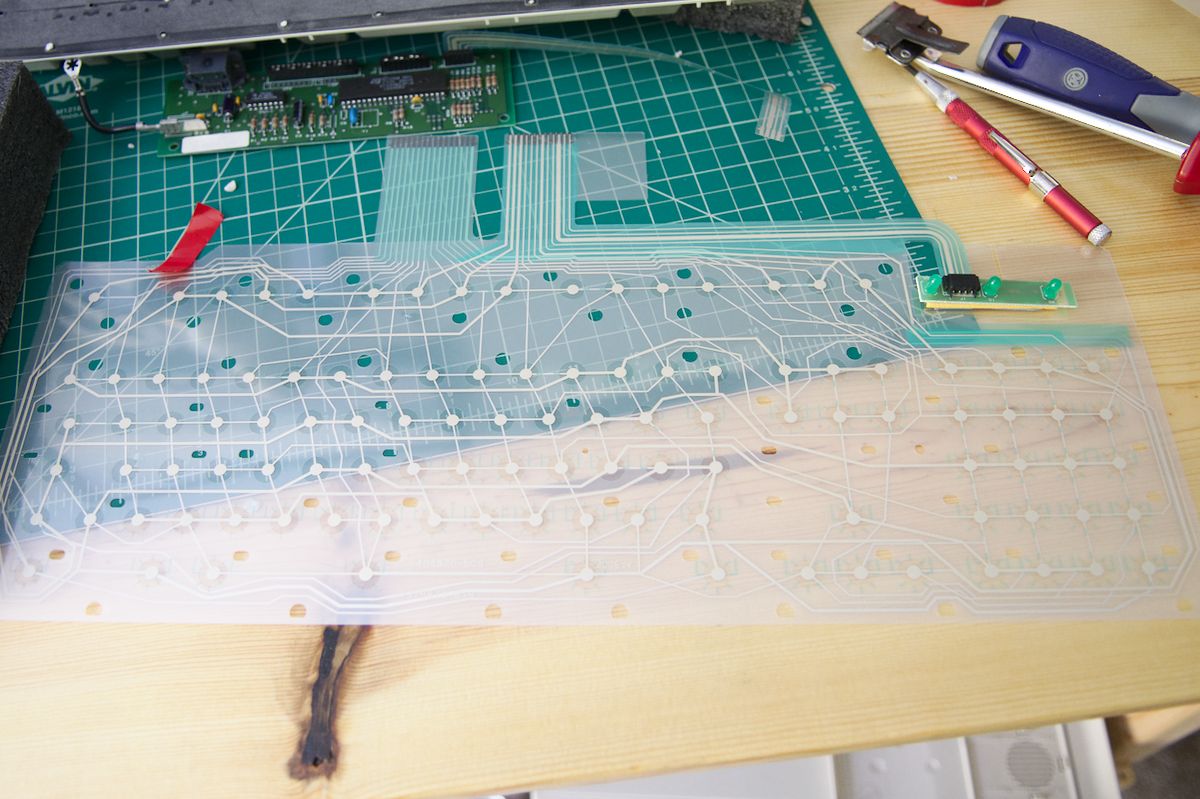

It arrived and I found that some of the keys were non-functional, which I tracked back to a dead line in the membrane. Since I'd never fully deconstructed a Model M before I decided to give it a go. Usually I just do the screw mod when the rivets break. Ordered the $10 membrane from Unicomp and it arrived Saturday. Just tonight I got the board all the way back together and it works! Typing this post on it right now. Below are some photos and comments on the process. It was more challenging than I thought it would be, getting the springs and flappers to stay in place while you re-attach the membrane and back plate is kind of tricky ...

Model information, this particular unit was built on Feb 06 1990

Beige/cream barrel plate, this is a new one to me. I've only ever seen black ones ...

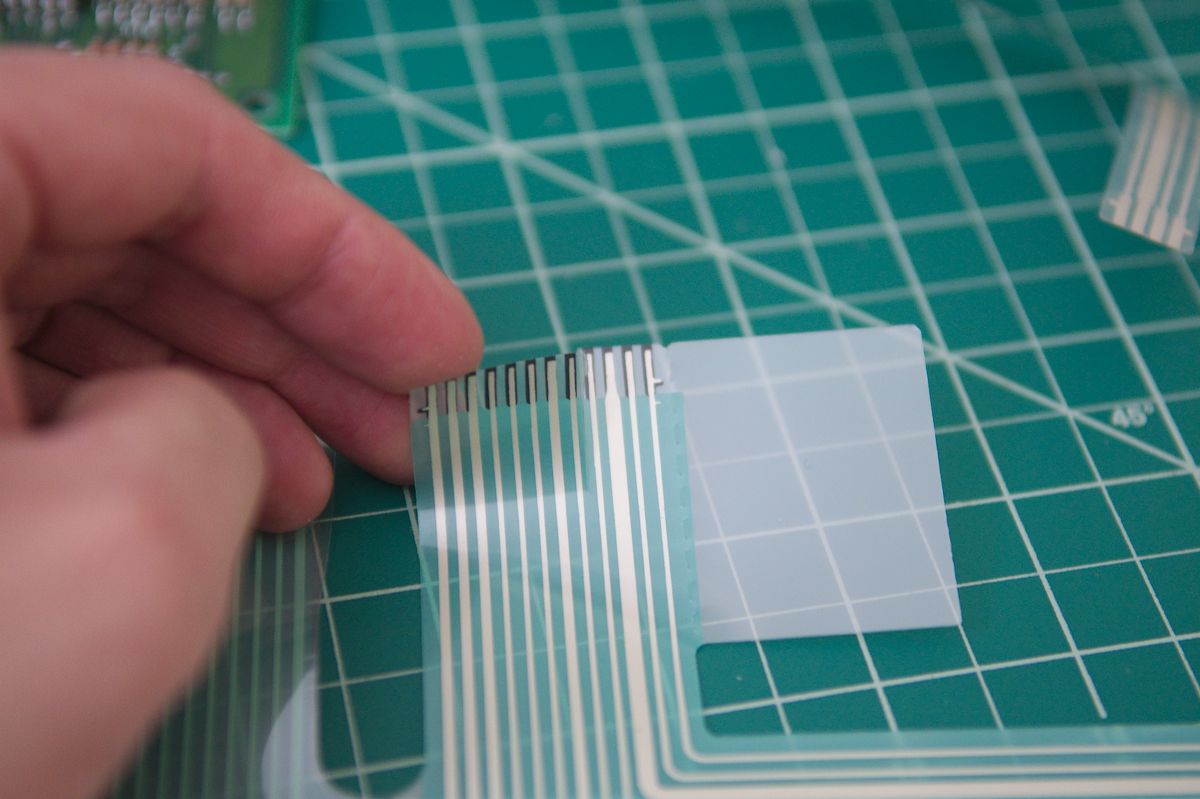

Just a quick note on the new membranes from Unicomp: if you have an older M you're restoring you'll need to trim off the rightmost four lines from the smaller ribbon cable. These membranes work with the older Ms but have the built-in lines for the LEDs on the new models. I just used a razor blade and some patience.

New membrane pre-install...

Keycaps back in and ready to be tested!

All back together and ready to go. All in all not too bad of an experience and I found some threads on here helpful. Just thought I'd share the journey. Not sure how rare or special this particular Model M is, as it just showed up in my running eBay search. Found some info on the Clicky Keyboards site but that's about it. I think the membrane went bad due to some liquid exposure as it looks like there was some dried liquid in between the sheets. Anyway, should be good for another 28 years now I reckon.